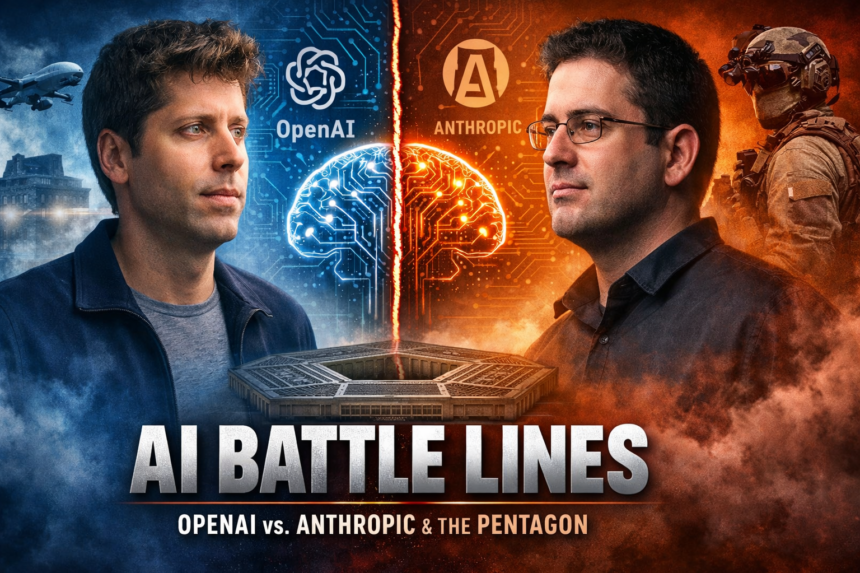

How the AI Race Turned Into a National Security Debate

Building super-smart AI used to be a pure tech story. Now? It’s turning into a geopolitical minefield. Case in point: the recent drama between OpenAI, its chief rival Anthropic, and the Pentagon. It’s sparked a massive debate over just how cozy Silicon Valley should get with the military.

Right in the middle of this mess is OpenAI’s CEO, Sam Altman. He’s out there insisting that his company shares the exact same ethical ‘red lines’ as Anthropic. The catch? Anthropic actually walked away from a government partnership over those lines, while OpenAI swooped in and signed on the dotted line.

The Pentagon’s Push for Artificial Intelligence

The U.S. military has been aggressively looking for ways to plug AI into defense systems, from crunching intelligence data to managing battlefield logistics. But hammering out contracts with AI startups hasn’t been easy. Take Anthropic, founded by a group of ex-OpenAI researchers. The company reportedly balked at a Pentagon deal because the military pushed back on its strict terms. Anthropic drew two massive lines in the sand: no mass domestic surveillance, and absolutely no fully autonomous weapons.

Sam Altman’s Position on AI ‘Red Lines’

In a weird twist, Altman publicly backed Anthropic’s stance. He claims OpenAI is on the exact same page regarding surveillance and killer robots. Yet OpenAI went ahead and secured its own agreement with the Pentagon, allowing its tech to be used in classified environments. Altman tried to spin this as an effort to ‘de-escalate’ the tension between the tech industry and the government, basically saying you can collaborate without crossing the line.

Backlash and Public Criticism

Unsurprisingly, people didn’t buy it. The optics were terrible. Word got out that OpenAI locked down its deal right after Anthropic walked, making the move look incredibly opportunistic. To his credit, Altman owned up to the bad PR, admitting the whole thing looked ‘sloppy.’ But the damage was done. Protesters showed up at OpenAI’s offices, and internally, things weren’t much better. Employees were passing around letters begging leadership not to cave to military pressure.

The OpenAI vs. Anthropic Rivalry

You can’t really talk about this without looking at the bad blood between the two companies. Anthropic was born in 2021 when a chunk of OpenAI’s staff bailed because they felt OpenAI wasn’t taking safety seriously enough. Since then, it’s been a fierce rivalry. OpenAI is all about moving fast and scaling up commercial tech, while Anthropic heavily markets itself as the cautious, ‘safety-first’ alternative. This Pentagon spat is just the latest, most public arena for their feud.

The Bigger Question: Who Sets the Ethical Boundaries?

Zooming out, this whole ordeal forces a really uncomfortable question: who actually gets to decide where the boundaries are? Pro-collaboration folks argue the military is going to use AI anyway, so they might as well use models from responsible companies. Critics think that’s a slippery slope toward normalized mass surveillance and sci-fi-style robot warfare. And they aren’t just being paranoid—AI ethics experts have been screaming from the rooftops about the risks of deploying this tech without ironclad rules.

What This Means for the Future of AI Governance

Ultimately, how this OpenAI-Anthropic dust-up settles could write the rulebook for the entire industry. If tech giants can agree on a shared ethical standard for military contracts, it might set a global precedent. If not? Expect a lot more calls for aggressive government regulation. For now, Altman is sticking to his story: OpenAI is just as principled as the competition. Whether anyone actually believes him could shape the next era of the AI arms race.